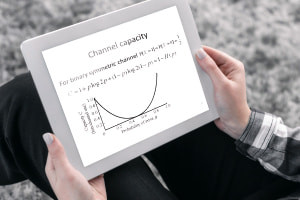

This course covers the fundamentals of channel coding and channel capacity. It begins by explaining why channel codes matter in information theory. You will discover how these coding schemes protect data from being corrupted in the communication channel, and how accurate information can be conveyed over a noisy channel. Next, you study the concepts of ‘mapping’ and ‘inverse mapping’ of the input and output data sequences. You look at the roles of the encoder at the transmitter and the decoder at the receiver in counteracting channel noise. Following this, the proof of Shannon’s channel coding theorem for the binary symmetric channel is explained. Discover what the sphere-packing bound implies about how many codewords an error-correcting code can contain. The course describes how the parameters of a random block code are bounded, and explores the conditions a code must satisfy to be deemed perfect.
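As a rough illustration of the binary symmetric channel and the sphere-packing bound mentioned above, the following Python sketch computes the channel capacity C = 1 - H2(p) for a crossover probability p and checks the sphere-packing (Hamming) bound for a binary (n, k) code that corrects t errors. The function names are hypothetical and chosen only for this example; they are not taken from the course material.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy H2(p) in bits, with H2(0) = H2(1) = 0 by convention."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def bsc_capacity(p: float) -> float:
    """Capacity of a binary symmetric channel, in bits per channel use."""
    return 1.0 - binary_entropy(p)


def hamming_sphere_volume(n: int, t: int) -> int:
    """Number of binary words within Hamming distance t of a given word."""
    return sum(math.comb(n, i) for i in range(t + 1))


def satisfies_sphere_packing(n: int, k: int, t: int) -> bool:
    """True if 2^k disjoint radius-t spheres can fit among the 2^n words."""
    return 2**k * hamming_sphere_volume(n, t) <= 2**n


def is_perfect(n: int, k: int, t: int) -> bool:
    """True if the parameters meet the sphere-packing bound with equality."""
    return 2**k * hamming_sphere_volume(n, t) == 2**n


if __name__ == "__main__":
    print("BSC capacity at p = 0.11:", bsc_capacity(0.11))
    print("(7, 4) code with t = 1 is perfect:", is_perfect(7, 4, 1))
```

The (7, 4) Hamming code and the (23, 12) Golay code are standard examples whose parameters meet the bound with equality, which is what "perfect" means in this context.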

Next, it explains how to bound the error probability of a code using the random coding bound. You will see how the bound is established from the mean error probability over an ensemble of codes. In addition, the significance of random coding in proving the achievability parts of results in network information theory is explained. Next, the course analyzes how the proof of Shannon’s coding theorem extends to more general channels. You will discover the importance of the strong and weak converses, which describe what happens to the error probability when codes are used at rates above channel capacity. Following this, the capacity of the Gaussian channel is highlighted, and you explore how the discrete-time channel extends to a continuous-time channel with a given bandwidth. Next, you delve into methods for comparing transmission systems against the Shannon limit. The role of discrete-time channels in modelling other communication channels is also described.
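To make the Gaussian channel and the Shannon limit more concrete, here is a minimal Python sketch that assumes the standard band-limited additive white Gaussian noise model, where capacity is C = B log2(1 + SNR). It also computes the minimum Eb/N0 needed at a given spectral efficiency, which approaches about -1.59 dB as the efficiency tends to zero. The function names and the example figures are illustrative assumptions, not part of the course.

```python
import math


def awgn_capacity_bps(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon capacity of a band-limited AWGN channel, in bits per second."""
    return bandwidth_hz * math.log2(1.0 + snr_linear)


def min_ebn0_db(spectral_efficiency: float) -> float:
    """Minimum Eb/N0 in dB for reliable transmission at eta bits/s/Hz.

    Setting the rate equal to capacity gives Eb/N0 = (2^eta - 1) / eta.
    expm1 keeps the numerator accurate when eta is very small.
    """
    eta = spectral_efficiency
    if eta <= 0:
        raise ValueError("spectral efficiency must be positive")
    ratio = math.expm1(eta * math.log(2.0)) / eta
    return 10.0 * math.log10(ratio)


if __name__ == "__main__":
    # Hypothetical link: 1 MHz of bandwidth at an SNR of 20 dB.
    snr = 10 ** (20 / 10)
    print("Capacity (bit/s):", awgn_capacity_bps(1e6, snr))
    print("Min Eb/N0 at 2 bit/s/Hz (dB):", min_ebn0_db(2.0))
    print("Limit as efficiency -> 0 (dB):", min_ebn0_db(1e-6))
```

Comparing a real system's operating point (its spectral efficiency and the Eb/N0 it requires) with `min_ebn0_db` is one simple way to express how far that system sits from the Shannon limit.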

Finally, the course illustrates how the channel coding theorem is derived for the Gaussian channel. You will explore the converse and achievability proofs of the theorem. Next, study the various kinds of parallel channels that arise in channel capacity problems. The notions of orthogonal frequency-division multiplexing (OFDM), multiple-input multiple-output (MIMO) and discrete multi-tone (DMT) systems, such as those used in DSL, are clarified. You also learn how the total capacity of parallel independent channels can be increased by distributing power across the channels. Lastly, study the need for equalization on communication channels. You will discover how water-filling algorithms maximize the total data rate across sub-channels by assigning more power to the sub-channels with a better signal-to-noise ratio (SNR). ‘Understanding Channel Coding and Capacity in Information Theory’ is an enlightening course that explores the basics of coding for reliable transmission over noisy channels. Start learning how to communicate information with a minimal probability of error by registering for free today.
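The water-filling idea for parallel independent Gaussian channels can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: each sub-channel i has a known noise power N_i, the total transmit power is fixed, and the allocation takes the form P_i = max(mu - N_i, 0) for a common "water level" mu. The function names and the sample numbers are hypothetical.

```python
import math


def water_filling(noise, total_power):
    """Return (powers, water_level) for parallel Gaussian sub-channels.

    noise: positive noise powers N_i, one per sub-channel.
    total_power: non-negative power budget shared across sub-channels.
    """
    if not noise or min(noise) <= 0 or total_power < 0:
        raise ValueError("need positive noise powers and a non-negative budget")
    ordered = sorted(noise)
    # Try using the k quietest sub-channels, starting with all of them,
    # and stop once the water level rises above the noisiest one in use.
    for k in range(len(ordered), 0, -1):
        level = (total_power + sum(ordered[:k])) / k
        if level > ordered[k - 1]:
            break
    powers = [max(level - n, 0.0) for n in noise]
    return powers, level


def total_capacity(noise, powers):
    """Sum of 0.5 * log2(1 + P_i / N_i), in bits per channel use."""
    return sum(0.5 * math.log2(1.0 + p / n) for p, n in zip(powers, noise))


if __name__ == "__main__":
    noise = [1.0, 2.0, 5.0]  # hypothetical sub-channel noise powers
    powers, level = water_filling(noise, total_power=3.0)
    print("water level:", level)
    print("powers:", powers)
    print("capacity with water-filling:", total_capacity(noise, powers))
    print("capacity with equal power:", total_capacity(noise, [1.0, 1.0, 1.0]))
```

In this sample, the noisiest sub-channel receives no power at all, and the resulting total capacity is higher than it would be with the same budget split equally.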

What You Will Learn In This Free Course

All Alison courses are free to enrol, study, and complete. To successfully complete this Certificate course and become an Alison Graduate, you need to achieve 80% or higher in each course assessment.

Once you have completed this Certificate course, you have the option to acquire an official Certificate, which is a great way to share your achievement with the world.

Your Alison certificate is:

- Ideal for sharing with potential employers.

- Great for your CV, professional social media profiles, and job applications.

- An indication of your commitment to continuously learn, upskill, and achieve high results.

- An incentive for you to continue empowering yourself through lifelong learning.

Alison offers two types of Certificate for completed Certificate courses:

- Digital Certificate: a downloadable Certificate in PDF format immediately available to you when you complete your purchase.

- Physical Certificate: a physical version of your officially branded and security-marked Certificate.

All Certificates are available to purchase through the Alison Shop. For more information on purchasing an Alison Certificate, please visit our FAQs. If you decide not to purchase your Alison Certificate, you can still demonstrate your achievement by sharing your Learner Record or Learner Achievement Verification, both of which are accessible from your Account Settings.

Knowledge & Skills You Will Learn
